The ongoing research project “Exploring the Pandemic Impact on Congregations” (EPIC), part of Hartford Institute for Religion Research, has released a new report titled “Signs of Rebound Amid Uneven Recovery.” The results are cautiously optimistic. Key findings, summarized on their website, include the following:

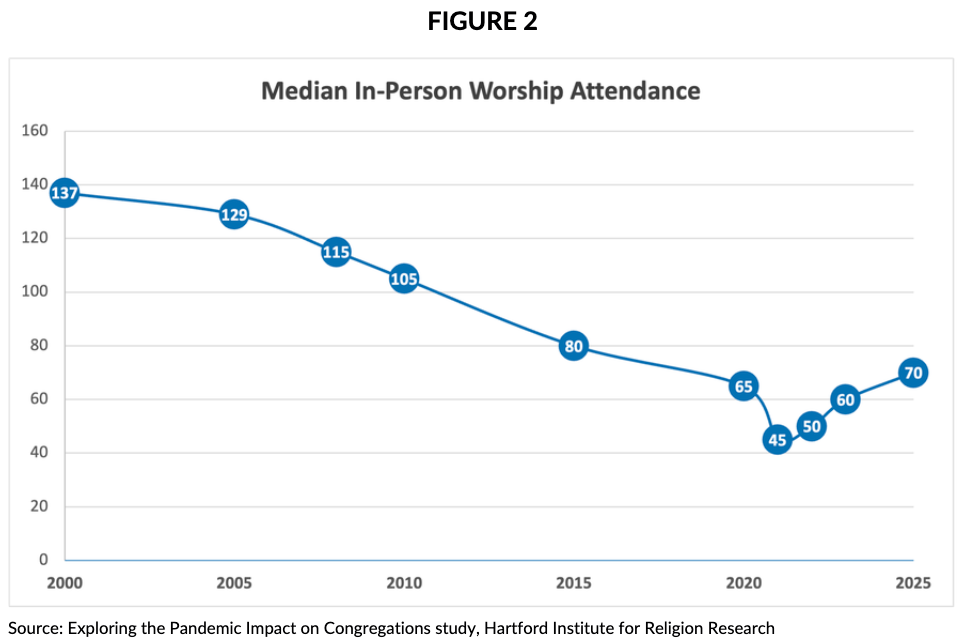

- “Median in-person worship attendance has risen to 70 — surpassing pre-pandemic levels and marking the first positive gain in 25 years of tracking

- “Median congregational income reached $205,000 in 2025, well above inflation-adjusted expectations

- “Volunteer participation has rebounded to pre-pandemic levels, with 40% of congregants now volunteering regularly

- “58% of congregational leaders say their congregation is stronger now than before the pandemic

- “Clergy well-being has improved across physical, mental, spiritual, relational, and financial dimensions”

The new report makes me cautiously optimistic about the state of organized religion. It also confirms anecdotal evidence that at least some congregations are experiencing a bit of growth. Growth, that is, compared to the past few years: 2025 median worship attendance (traditionally one of the best measures of growth) was slightly higher than it was before the pandemic, as the graph from the report shows.

The report includes data from many different denominations. Figures for Unitarian Universalism may vary somewhat from the nationwide norm. For example, from what I remember of the data collected by the Unitarian Universalist Association (UUA), we supposedly hit our peak attendance in about 2005 and thus started declining a bit later than the national average.

On the other hand, attendance data reported by the UUA depends on figures reported by local Unitarian Universalist (UU) congregations, and from what I’ve seen, many local UU congregations are not good at collecting data on average attendance. I’d place far more trust in the data collected by Hartford Institute for Religion Research. Just remember as you read their report that Unitarian Universalists fit neatly into the sociological category of “Mainline” congregations.

I’m only cautiously optimistic, because the trends outlined in Robert Putnam’s book *Bowling Alone* continue: most Americans no longer want to participate in voluntary associations. And in spite of all the chatter about how isolated people are feeling these days, it remains hard to convince most Americans that showing up at a values-based community once a week might help reduce their feelings of isolation. If we could just convince people of that, then we might see a more robust increase in weekly attendance.